Twitter to reduce visibility of ‘disruptive’ and negative accounts

Twitter is taking another step to reduce the amount of harmful content on its site, this time expanding the effort beyond users who explicitly break the social network’s rules.

The company says there is a small number of Twitter accounts with a highly negative effect on other users’ experience on the app.

These accounts may be spreading spam, commenting in abusive or disruptive ways, or trying to game the search-ranking formula to promote irrelevant content.

These people – or bots – don’t necessarily merit an outright ban from the site, but this week Twitter plans to start reducing their visibility in conversation threads, in search results, and in any other part of the app where an algorithm determines the order of people’s posts.

Twitter’s automated system won’t decide an account’s prominence by examining what it posts; instead, the algorithm will base the decision on how the account is set up and how it interacts with others on the site.

Posts may get lower visibility if the account is frequently blocked or muted, if it hasn’t confirmed an associated email address, if it’s connected to other problematic accounts, or if it frequently mentions strangers in tweets.

If an account exhibits many of these signals of bad behavior, its replies to other users’ popular tweets may be hidden behind a “show more replies” button. The tweets and replies won’t actually be deleted.

“The goal is to make sure that what we’re showcasing isn’t meant to distract from or disrupt the conversation,” Del Harvey, Twitter’s vice president of trust and safety, told reporters at a briefing.

In early tests, the change reduced abuse reports in search by 4 percent and in conversations by 8 percent. It may not sound like a large impact, but “we aren’t trying to remove all disagreement from communal places,” Harvey said. “What would Twitter be without controversy?”

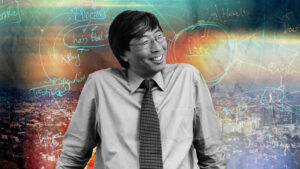

CEO Jack Dorsey has said the company is working more diligently to make Twitter a healthier environment for users. Over the past few years, he has acknowledged that the company hasn’t done enough to combat abuse and harassment on its site, where anyone can quickly create an account that doesn’t have to be linked to a real name – a system that can be easily manipulated.

With enough time and a little programming ability, anyone – from marketers to scammers to political operatives – can create networks of bot accounts that drum up fake popularity or controversy on the site, as Russian operatives did ahead of the 2016 U.S. presidential election.

Dorsey said he likes the change to the ranking system because it can be implemented globally without hiring thousands more people. The San Francisco-based company is also going to work with academics to study human behavior and conversation on the site, an initiative for which Twitter has already received 230 research proposals.

Still, everything the company does will have to be constantly re-evaluated, Dorsey said Monday.

“Even a new system like this, a new model, people will figure out how to take advantage of it, how to game it,” he said.